Moeraki, Nugget Point and Curio Bay

For a while now we have been trying to fit in a real rest day, and today, Saturday 28 November, we finally managed it. We are in Te Anau, a fairly touristy little town on a large lake on the eastern edge of Fiordland. We did next to nothing today, so that Rowan could have a day of rest too. We took a short walk through the village (1,900 inhabitants), and Rowan did a bit of cycling and played in the playground. Today is also the first day of our whole holiday with bad weather; until now we have managed to dodge it. Fortunately, the forecast for the coming days in the west is somewhat better.

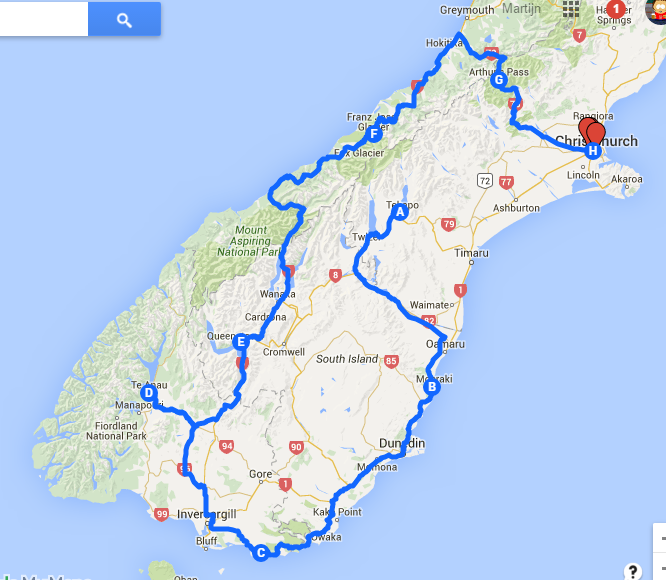

On Tuesday 24 November we left Lake Tekapo and drove back to the east coast via the scenic route. Along the way we stopped briefly at Lake Pukaki, not far from Tekapo, which has the same kind of magical feel. Further along the route there was another sight, the Clay Cliffs of Omarama. Without really knowing what to expect, we drove down a 4 km gravel road and went for a walk. From a distance the landscape already looked quite impressive, but you could also walk right in between the rocks and climb a good way up. Rowan walked the whole stretch too (the “100m” on the sign was a rather optimistic estimate!) and even climbed quite a way up. After this effort we drove a good distance further and stopped in a small place called Hampden, on the coast, just north of the slightly better-known Moeraki.

There we stayed at a small campsite close to the beach, with absurdly small pitches but a large playing field with a trampoline, where Rowan had plenty of fun. About 10 km from this campsite there is also a spot where you can see yellow-eyed penguins, but after much deliberation and consultation with Rowan we decided not to head out in the camper again that afternoon. Fortunately there was a duck with ducklings at the campsite, so we still got to see some wildlife 😉

On Wednesday we left the campsite and began our day with a visit to the Moeraki Boulders, a collection of stones on the beach that are an attraction because of their shape and size. After that we drove further along the coast to Dunedin. We stopped there briefly to take a photo of the railway station, and then quickly moved on. The plan was to make only a short drive that day, and we had picked a campsite in Taieri Mouth, just south of Dunedin. On arrival at the campsite, where we did not find a living soul, we were a little taken aback by how it all looked (although it probably would have turned out fine), and we decided to look for another campsite. Over a picnic by the water we went through quite a few options, and in the end we chose a campsite 75 km further on. That was much farther than we really wanted, but campsites with a few more facilities are few and far between here.

That is how we ended up in Kaka Point. This campsite was deserted as well (no managers and no other guests), but the washrooms and the kitchen (and the promise of free WiFi 😉) looked reasonable, so we decided to stay. Around 5 pm a manager turned up, just as we were about to leave the campsite again for a visit to Nugget Point.

At Nugget Point there is an old lighthouse and a small colony of yellow-eyed penguins, where we wanted to try our luck this time. It was quite a walk to the lighthouse, which Rowan fortunately managed too. The view from the lighthouse was certainly worth it. By now it was getting quite late, and we were really here to watch penguins. By way of background: yellow-eyed penguins are a rare species (5,000 individuals) found only in New Zealand. They nest in the dense vegetation by the beach, and once the eggs hatch (in November) the birds go out to sea every day and return at the end of the afternoon to feed the chicks. When they come ashore, you can see them waddling across the beach on their way back to the nest. Ten penguin pairs live at Nugget Point. Penguin watching therefore mostly means staring at the beach and the water with a great deal of patience, hoping one will come ashore. We gathered from other visitors that a few had come ashore just before we arrived, but in the three quarters of an hour we were there, unfortunately there was nothing to see.

Because Kaka Point was really no more than a stopover on the way to Curio Bay, where besides yellow-eyed penguins you can also see sea lions, dolphins and other animals, we set off again on Thursday morning. Fortunately it was only about 90 km to Curio Bay, and the route through The Catlins was beautiful.

Curio Bay is a remote place, and the only campsite there sits on a headland, so you can look out over the ocean in almost every direction. On one side is a sandy beach where sea lions live, and on the other are cliffs, where you can watch the water pound the rocks from very close by and where you can also sit comfortably to spot dolphins and other marine life. The beach where the yellow-eyed penguins live is 150 m from the campsite. Unlike at Nugget Point, where you are not allowed on the beach at this time of year so as not to disturb the penguins, at Curio Bay you can simply walk onto the beach, provided you keep at least 10 metres away from the animals.

So as not to arrive too late this time, we went to the beach around half past four. In the hours that followed, the beach filled up with quite a few people, some even with folding chairs and bottles of wine. Given what we thought we knew, that penguins do not come ashore when they sense danger and that they are very shy, we were greatly surprised by how things went here. The whole beach was dotted with people, and if I were a penguin, I would stay in the sea!

And indeed, after 2.5 hours we still had not seen a penguin. Rowan and Marijke had by then gone back to the campsite to eat, and around 19:15 Martijn called it a day too. Once Rowan was in bed, Martijn went back to the beach, where at that moment a penguin was just making its way across the sand. Followed by what looked like an army of paparazzi, the little creature had to find its way back to its nest. Eventually it disappeared into the dense vegetation above the beach, and 10 minutes later all but a few people had left. With Martijn back at the campsite, Marijke could also go back to the beach for a while, and for her too a penguin was kind enough to show itself. Mission accomplished 😉

The Curio Bay Camping Ground is, incidentally, a place of extremes. The fantastic location stands in stark contrast to the rest of the experience. The washrooms in particular (if you can call them that) were dreadful. Now, I have seen quite a few campsite showers in my life, and they often leave something to be desired, but never before had I had a shower from which NO water came out. That was the shortest shower ever. Unfortunately reception did not open until 11 am, so there was no chance to ask for my 2 dollars back either.

We quickly got back on the road and drove via Invercargill to Te Anau, where we arrived yesterday. Tomorrow we will do something fun in the area, and then we will drive on to Queenstown. Until next time!
